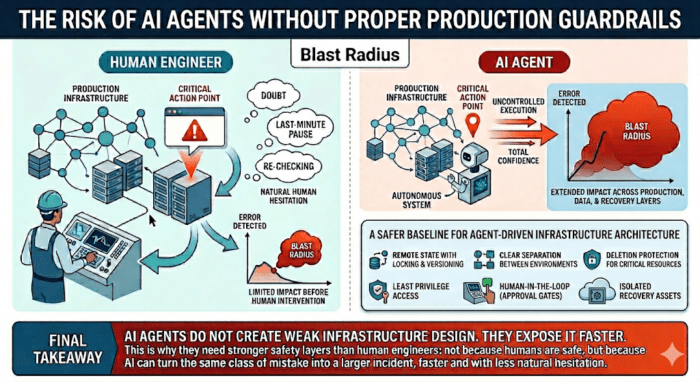

Human engineers make mistakes.

AI agents can make the same mistakes faster and extend their impact further before anyone notices.

Weak production guardrails become much more dangerous when risky actions are executed without natural human hesitation.

Infrastructure automation depends on accurate state, controlled execution, and predictable change workflows. If those are weak, automated actions may be based on an incomplete or incorrect view of the environment.
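One way to apply this is to make an agent compare its recorded view of the environment with what it actually observes before it acts. The Python sketch below is illustrative only. The `state_problems` helper, the idea of mapping each resource ID to a configuration fingerprint, and the 15-minute freshness window are all assumptions, not features of any particular tool.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional


def state_problems(
    recorded: Dict[str, str],   # hypothetical: resource ID -> config fingerprint from stored state
    observed: Dict[str, str],   # hypothetical: resource ID -> config fingerprint seen live
    recorded_at: datetime,
    max_age: timedelta = timedelta(minutes=15),  # arbitrary freshness window for this sketch
    now: Optional[datetime] = None,
) -> List[str]:
    """Return reasons the recorded state should not be trusted; empty means it looks usable."""
    now = now or datetime.now(timezone.utc)
    problems = []
    if now - recorded_at > max_age:
        problems.append("recorded state is stale")
    # Resources the state believes exist but that are missing in reality.
    for rid in sorted(recorded.keys() - observed.keys()):
        problems.append(f"{rid} is in recorded state but not observed")
    # Resources that exist but that the state does not know about.
    for rid in sorted(observed.keys() - recorded.keys()):
        problems.append(f"{rid} exists but is not in recorded state")
    # Resources known to both whose configuration no longer matches.
    for rid in sorted(recorded.keys() & observed.keys()):
        if recorded[rid] != observed[rid]:
            problems.append(f"{rid} has drifted")
    return problems
```

If the list is non-empty, the safer default is to stop and refresh state rather than act on a view that may be wrong.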

Critical production resources should not be easy to delete. Mature environments make destructive operations difficult, explicit, and reviewable.
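Platform mechanisms such as Terraform's `prevent_destroy` lifecycle setting or a provider's own deletion protection should come first. As a sketch of the same idea at the application level, the guard below refuses a delete unless it is explicit and reviewable. The `Resource` model, the `protected` tag, and the `change_ticket` parameter are hypothetical names used only for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


class DeletionBlocked(Exception):
    """Raised when a delete request does not meet the protection rules."""


@dataclass
class Resource:
    # Hypothetical resource model for this sketch, not a real SDK type.
    name: str
    environment: str  # e.g. "production" or "staging"
    tags: dict = field(default_factory=dict)


def check_delete_allowed(
    resource: Resource,
    confirm_name: Optional[str],
    change_ticket: Optional[str],
) -> None:
    """Raise DeletionBlocked unless the delete is explicit and reviewable.

    Assumed rules:
    - resources tagged protected=true cannot be deleted through this path at all;
    - production deletes require retyping the exact resource name
      and referencing a reviewed change ticket.
    """
    if resource.tags.get("protected") == "true":
        raise DeletionBlocked(
            f"{resource.name} is protected; remove protection through a reviewed change first"
        )
    if resource.environment == "production":
        if confirm_name != resource.name:
            raise DeletionBlocked("production delete requires the exact resource name as confirmation")
        if not change_ticket:
            raise DeletionBlocked("production delete requires a reviewed change ticket")
```

The point of the design is that the easy path fails: an agent that simply calls delete on a protected production database gets an exception, and removing the protection becomes its own separate, reviewable change.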

Backups and recovery assets should sit outside the execution path that manages primary infrastructure. Otherwise, a single failure path can affect both production and recovery.
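A simple audit can catch the case where the credentials used by automation can also change recovery assets. The sketch below assumes a hypothetical permission format of `(action, resource pattern)` pairs and an assumed set of destructive actions; real IAM policies are richer, so treat this as an illustration of the check, not a policy evaluator.

```python
from fnmatch import fnmatch
from typing import Iterable, List, Tuple

# Hypothetical permission format: (action, resource pattern), e.g. ("delete", "backups/*").
Permission = Tuple[str, str]

# Actions treated as able to damage a backup (assumption for this sketch).
DESTRUCTIVE_ACTIONS = {"delete", "modify", "overwrite"}


def recovery_path_violations(
    agent_permissions: Iterable[Permission],
    backup_resources: Iterable[str],
) -> List[Tuple[str, str]]:
    """Return (action, backup resource) pairs the agent's credentials could change.

    An empty list means none of the listed backups is reachable through a
    destructive action on the same execution path.
    """
    backups = list(backup_resources)
    violations = []
    for action, pattern in agent_permissions:
        if action not in DESTRUCTIVE_ACTIONS:
            continue  # read-only access does not put recovery at risk here
        for resource in backups:
            if fnmatch(resource, pattern):
                violations.append((action, resource))
    return violations
```

For example, an agent granted `("delete", "backups/*")` alongside its production permissions would be flagged, because one mistaken run could then remove both the primary data and its backup.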

Humans often introduce accidental hesitation: re-checking, distraction, doubt, or a last-minute pause. AI agents do not hesitate unless the system forces them to. They act with the same confidence whether or not their understanding of the context is complete. That makes approval gates, policy checks, and execution limits more important in agent-driven workflows.
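Those three controls can be combined into one gate that every agent action passes through. The Python sketch below is a minimal illustration under stated assumptions: `ProposedAction`, `ActionGate`, the set of destructive verbs, and the per-run limit of 20 are hypothetical, and `approve` stands in for whatever mechanism actually asks a human.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class ProposedAction:
    # Hypothetical shape of an action an agent wants to run.
    verb: str         # e.g. "read", "scale", "delete"
    target: str       # resource identifier
    environment: str  # e.g. "production" or "staging"


# Verbs treated as destructive (assumption for this sketch).
DESTRUCTIVE_VERBS = {"delete", "destroy", "drop", "truncate"}


class ActionGate:
    """Policy check, approval gate and execution limit for agent actions (sketch)."""

    def __init__(self, approve: Callable[[ProposedAction], bool], max_actions_per_run: int = 20):
        self.approve = approve  # returns True only on explicit human approval
        self.max_actions_per_run = max_actions_per_run
        self.executed = 0  # number of actions allowed so far in this run

    def authorize(self, action: ProposedAction) -> Tuple[bool, str]:
        # Execution limit: caps a runaway loop regardless of what the agent intends.
        if self.executed >= self.max_actions_per_run:
            return False, "execution limit reached for this run"
        # Policy check: destructive production actions always go to a human.
        needs_human = action.verb in DESTRUCTIVE_VERBS and action.environment == "production"
        if needs_human and not self.approve(action):
            return False, "human approval not granted"
        self.executed += 1
        return True, "allowed"
```

The gate deliberately sits outside the agent: the agent can propose anything, but only actions that pass the policy, the approval hook, and the run limit are counted and executed.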

None of these safety principles is new. In fact, they have been standard engineering practice for years. What changes with AI agents is not the principle, but the cost of neglecting it.

What might once have remained limited or recoverable can now escalate much faster because there is less human hesitation to slow execution down.

Blast radius

Another way to look at this is blast radius.

The problem is not that a system can make a mistake. It is how much of the system that mistake is allowed to affect.

When blast radius is not explicitly limited, a single automated action can propagate across production resources, data layers, and even recovery paths, turning what should be a localised error into a full system failure.
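Blast radius can be made explicit by checking a planned change before it runs. In the sketch below, each resource in a plan carries a layer label; the labels, the default limit of five resources, and the rule that recovery resources are never in scope are assumptions for illustration. In practice such labels might come from tags or account boundaries.

```python
from collections import Counter
from typing import Dict, FrozenSet, List

RECOVERY_LAYER = "recovery"  # hypothetical label for backups and recovery assets


def check_blast_radius(
    planned: Dict[str, str],  # resource ID -> layer label, e.g. "compute", "data", "recovery"
    max_resources: int = 5,
    allowed_layers: FrozenSet[str] = frozenset({"compute"}),
) -> List[str]:
    """Return reasons a planned change exceeds its blast radius; empty means within limits."""
    problems = []
    if len(planned) > max_resources:
        problems.append(f"touches {len(planned)} resources (limit {max_resources})")
    layers = Counter(planned.values())
    for layer in sorted(layers):
        if layer == RECOVERY_LAYER:
            # Recovery is always out of scope, even if listed in allowed_layers.
            problems.append("touches recovery resources")
        elif layer not in allowed_layers:
            problems.append(f"touches {layers[layer]} resource(s) in layer '{layer}'")
    return problems
```

A change that stays within a few compute resources passes; one that also reaches a database and a snapshot is rejected before anything executes, which keeps a localised error localised.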

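Teams using AI in infrastructure operations should, at a minimum, consider:

- accurate, current infrastructure state before any automated change runs;
- deletion protection that makes destructive operations difficult, explicit, and reviewable;
- backups and recovery assets kept outside the execution path that manages primary infrastructure;
- approval gates, policy checks, and execution limits on agent actions;
- an explicit limit on the blast radius of any single automated action.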

AI agents do not create weak infrastructure design.

They expose it faster.

That is why they need stronger safety layers than human engineers do: not because humans are safe, but because AI can turn the same class of mistake into a larger incident, faster and with less natural hesitation.

From design to production, I deliver reliable, scalable systems with reduced complexity and optimised costs.